Convex Optimization – Introduction

This course is useful for the students who want to solve non-linear optimization problems that arise in various engineering and scientific applications. This course starts with basic theory of linear programming and will introduce the concepts of convex sets and functions and related terminologies to explain various theorems that are required to solve the non linear programming problems. This course will introduce various algorithms that are used to solve such problems. These type of problems arise in various applications including machine learning, optimization problems in electrical engineering, etc. It requires the students to have prior knowledge of high school maths concepts and calculus.

In this course, the students will learn to solve the optimization problems like $min fleft ( x right )$ subject to some constraints.

These problems are easily solvable if the function $fleft ( x right )$ is a linear function and if the constraints are linear. Then it is called a linear programming problem (LPP). But if the constraints are non-linear, then it is difficult to solve the above problem. Unless we can plot the functions in a graph, then try to analyse the optimization can be one way, but we can”t plot a function if it”s beyond three dimensions. Hence there comes the techniques of non-linear programming or convex programming to solve such problems. In these tutorial, we will focus on learning such techniques and in the end, a few algorithms to solve such problems. first we will bring the notion of convex sets which is the base of the convex programming problems. Then with the introduction of convex functions, we will some important theorems to solve these problems and some algorithms based on these theorems.

Terminologies

-

The space $mathbb{R}^n$ − It is an n-dimensional vector with real numbers, defined as follows − $mathbb{R}^n=left { left ( x_1,x_2,…,x_n right )^{tau }:x_1,x_2,….,x_n in mathbb{R} right }$

-

The space $mathbb{R}^{mXn}$ − It is a set of all real values matrices of order $mXn$.

Convex Optimization – Linear Programming

Methodology

Linear Programming also called Linear Optimization, is a technique which is used to solve mathematical problems in which the relationships are linear in nature. the basic nature of Linear Programming is to maximize or minimize an objective function with subject to some constraints. The objective function is a linear function which is obtained from the mathematical model of the problem. The constraints are the conditions which are imposed on the model and are also linear.

- From the given question, find the objective function.

- find the constraints.

- Draw the constraints on a graph.

- find the feasible region, which is formed by the intersection of all the constraints.

- find the vertices of the feasible region.

- find the value of the objective function at these vertices.

- The vertice which either maximizes or minimizes the objective function (according to the question) is the answer.

Examples

Step 1 − Maximize $5x+3y$ subject to

$x+yleq 2$,

$3x+yleq 3$,

$xgeq 0 :and :ygeq 0$

Solution −

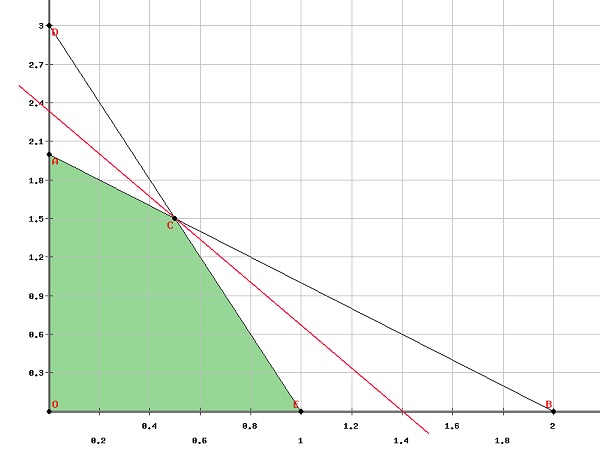

The first step is to find the feasible region on a graph.

Clearly from the graph, the vertices of the feasible region are

$left ( 0, 0 right )left ( 0, 2 right )left ( 1, 0 right )left ( frac{1}{2}, frac{3}{2} right )$

Let $fleft ( x, y right )=5x+3y$

Putting these values in the objective function, we get −

$fleft ( 0, 0 right )$=0

$fleft ( 0, 2 right )$=6

$fleft ( 1, 0 right )$=5

$fleft ( frac{1}{2}, frac{3}{2} right )$=7

Therefore, the function maximizes at $left ( frac{1}{2}, frac{3}{2} right )$

Step 2 − A watch company produces a digital and a mechanical watch. Long-term projections indicate an expected demand of at least 100 digital and 80 mechanical watches each day. Because of limitations on production capacity, no more than 200 digital and 170 mechanical watches can be made daily. To satisfy a shipping contract, a total of at least 200 watches much be shipped each day.

If each digital watch sold results in a $$2$ loss, but each mechanical watch produces a $$5$ profit, how many of each type should be made daily to maximize net profits?

Solution −

Let $x$ be the number of digital watches produced

$y$ be the number of mechanical watches produced

According to the question, at least 100 digital watches are to be made daily and maximaum 200 digital watches can be made.

$Rightarrow 100 leq :xleq 200$

Similarly, at least 80 mechanical watches are to be made daily and maximum 170 mechanical watches can be made.

$Rightarrow 80 leq :yleq 170$

Since at least 200 watches are to be produced each day.

$Rightarrow x +yleq 200$

Since each digital watch sold results in a $$2$ loss, but each mechanical watch produces a $$5$ profit,

Total profit can be calculated as

$Profit =-2x + 5y$

And we have to maximize the profit, Therefore, the question can be formulated as −

Maximize $-2x + 5y$ subject to

$100 :leq x:leq 200$

$80 :leq y:leq 170$

$x+y:leq 200$

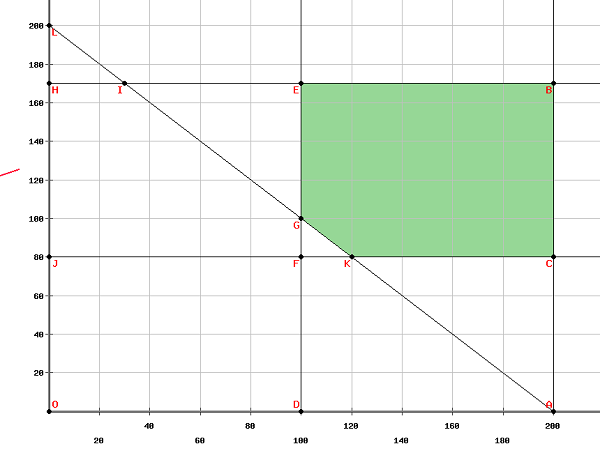

Plotting the above equations in a graph, we get,

The vertices of the feasible region are

$left ( 100, 170right )left ( 200, 170right )left ( 200, 180right )left ( 120, 80right ) and left ( 100, 100right )$

The maximum value of the objective function is obtained at $left ( 100, 170right )$ Thus, to maximize the net profits, 100 units of digital watches and 170 units of mechanical watches should be produced.

Convex Optimization – Norm

A norm is a function that gives a strictly positive value to a vector or a variable.

Norm is a function $f:mathbb{R}^nrightarrow mathbb{R}$

The basic characteristics of a norm are −

Let $X$ be a vector such that $Xin mathbb{R}^n$

-

$left | x right |geq 0$

-

$left | x right |= 0 Leftrightarrow x= 0forall x in X$

-

$left |alpha x right |=left | alpha right |left | x right |forall 😡 in X and :alpha :is :a :scalar$

-

$left | x+y right |leq left | x right |+left | y right | forall x,y in X$

-

$left | x-y right |geq left | left | x right |-left | y right | right |$

By definition, norm is calculated as follows −

-

$left | x right |_1=displaystylesumlimits_{i=1}^nleft | x_i right |$

-

$left | x right |_2=left ( displaystylesumlimits_{i=1}^nleft | x_i right |^2 right )^{frac{1}{2}}$

-

$left | x right |_p=left ( displaystylesumlimits_{i=1}^nleft | x_i right |^p right )^{frac{1}{p}},1 leq p leq infty$

Norm is a continuous function.

Proof

By definition, if $x_nrightarrow x$ in $XRightarrow fleft ( x_n right )rightarrow fleft ( x right ) $ then $fleft ( x right )$ is a constant function.

Let $fleft ( x right )=left | x right |$

Therefore, $left | fleft ( x_n right )-fleft ( x right ) right |=left | left | x_n right | -left | x right |right |leq left | left | x_n-x right | :right |$

Since $x_n rightarrow x$ thus, $left | x_n-x right |rightarrow 0$

Therefore $left | fleft ( x_n right )-fleft ( x right ) right |leq 0Rightarrow left | fleft ( x_n right )-fleft ( x right ) right |=0Rightarrow fleft ( x_n right )rightarrow fleft ( x right )$

Hence, norm is a continuous function.

Convex Optimization – Inner Product

Inner product is a function which gives a scalar to a pair of vectors.

Inner Product − $f:mathbb{R}^n times mathbb{R}^nrightarrow kappa$ where $kappa$ is a scalar.

The basic characteristics of inner product are as follows −

Let $X in mathbb{R}^n$

-

$left langle x,x right ranglegeq 0, forall x in X$

-

$left langle x,x right rangle=0Leftrightarrow x=0, forall x in X$

-

$left langle alpha x,y right rangle=alpha left langle x,y right rangle,forall alpha in kappa : and: forall x,y in X$

-

$left langle x+y,z right rangle =left langle x,z right rangle +left langle y,z right rangle, forall x,y,z in X$

-

$left langle overline{y,x} right rangle=left ( x,y right ), forall x, y in X$

Note −

-

Relationship between norm and inner product: $left | x right |=sqrt{left ( x,x right )}$

-

$forall x,y in mathbb{R}^n,left langle x,y right rangle=x_1y_1+x_2y_2+…+x_ny_n$

Examples

1. find the inner product of $x=left ( 1,2,1 right ): and : y=left ( 3,-1,3 right )$

Solution

$left langle x,y right rangle =x_1y_1+x_2y_2+x_3y_3$

$left langle x,y right rangle=left ( 1times3 right )+left ( 2times-1 right )+left ( 1times3 right )$

$left langle x,y right rangle=3+left ( -2 right )+3$

$left langle x,y right rangle=4$

2. If $x=left ( 4,9,1 right ),y=left ( -3,5,1 right )$ and $z=left ( 2,4,1 right )$, find $left ( x+y,z right )$

Solution

As we know, $left langle x+y,z right rangle=left langle x,z right rangle+left langle y,z right rangle$

$left langle x+y,z right rangle=left ( x_1z_1+x_2z_2+x_3z_3 right )+left ( y_1z_1+y_2z_2+y_3z_3 right )$

$left langle x+y,z right rangle=left { left ( 4times 2 right )+left ( 9times 4 right )+left ( 1times1 right ) right }+$

$left { left ( -3times2 right )+left ( 5times4 right )+left ( 1times 1right ) right }$

$left langle x+y,z right rangle=left ( 8+36+1 right )+left ( -6+20+1 right )$

$left langle x+y,z right rangle=45+15$

$left langle x+y,z right rangle=60$

Convex Optimization – Minima and Maxima

Local Minima or Minimize

$bar{x}in :S$ is said to be local minima of a function $f$ if $fleft ( bar{x} right )leq fleft ( x right ),forall x in N_varepsilon left ( bar{x} right )$ where $N_varepsilon left ( bar{x} right )$ means neighbourhood of $bar{x}$, i.e., $N_varepsilon left ( bar{x} right )$ means $left | x-bar{x} right |

Local Maxima or Maximizer

$bar{x}in :S$ is said to be local maxima of a function $f$ if $fleft ( bar{x} right )geq fleft ( x right ), forall x in N_varepsilon left ( bar{x} right )$ where $N_varepsilon left ( bar{x} right )$ means neighbourhood of $bar{x}$, i.e., $N_varepsilon left ( bar{x} right )$ means $left | x-bar{x} right |

Global minima

$bar{x}in :S$ is said to be global minima of a function $f$ if $fleft ( bar{x} right )leq fleft ( x right ), forall x in S$

Global maxima

$bar{x}in :S$ is said to be global maxima of a function $f$ if $fleft ( bar{x} right )geq fleft ( x right ), forall x in S$

Examples

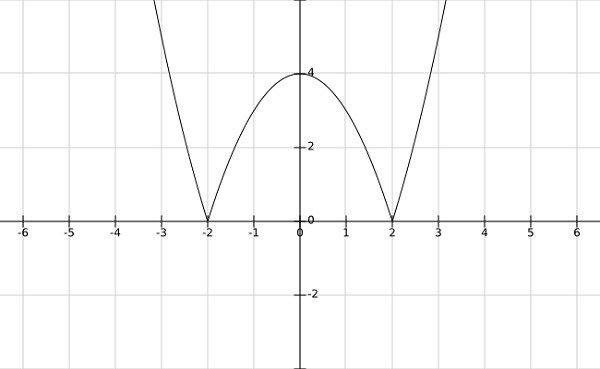

Step 1 − find the local minima and maxima of $fleft ( bar{x} right )=left | x^2-4 right |$

Solution −

From the graph of the above function, it is clear that the local minima occurs at $x= pm 2$ and local maxima at $x = 0$

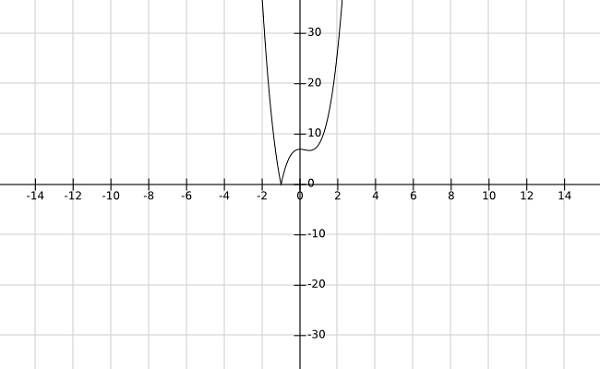

Step 2 − find the global minima af the function $fleft (x right )=left | 4x^3-3x^2+7 right |$

Solution −

From the graph of the above function, it is clear that the global minima occurs at $x=-1$.

Convex Optimization – Convex Set

Let $Ssubseteq mathbb{R}^n$ A set S is said to be convex if the line segment joining any two points of the set S also belongs to the S, i.e., if $x_1,x_2 in S$, then $lambda x_1+left ( 1-lambda right )x_2 in S$ where $lambda inleft ( 0,1 right )$.

Note −

- The union of two convex sets may or may not be convex.

- The intersection of two convex sets is always convex.

Proof

Let $S_1$ and $S_2$ be two convex set.

Let $S_3=S_1 cap S_2$

Let $x_1,x_2 in S_3$

Since $S_3=S_1 cap S_2$ thus $x_1,x_2 in S_1$and $x_1,x_2 in S_2$

Since $S_i$ is convex set, $forall$ $i in 1,2,$

Thus $lambda x_1+left ( 1-lambda right )x_2 in S_i$ where $lambda in left ( 0,1 right )$

Therfore, $lambda x_1+left ( 1-lambda right )x_2 in S_1cap S_2$

$Rightarrow lambda x_1+left ( 1-lambda right )x_2 in S_3$

Hence, $S_3$ is a convex set.

-

Weighted average of the form $displaystylesumlimits_{i=1}^k lambda_ix_i$,where $displaystylesumlimits_{i=1}^k lambda_i=1$ and $lambda_igeq 0,forall i in left [ 1,k right ]$ is called conic combination of $x_1,x_2,….x_k.$

-

Weighted average of the form $displaystylesumlimits_{i=1}^k lambda_ix_i$, where $displaystylesumlimits_{i=1}^k lambda_i=1$ is called affine combination of $x_1,x_2,….x_k.$

-

Weighted average of the form $displaystylesumlimits_{i=1}^k lambda_ix_i$ is called linear combination of $x_1,x_2,….x_k.$

Examples

Step 1 − Prove that the set $S=left { x in mathbb{R}^n:Cxleq alpha right }$ is a convex set.

Solution

Let $x_1$ and $x_2 in S$

$Rightarrow Cx_1leq alpha$ and $:and :Cx_2leq alpha$

To show:$::y=left ( lambda x_1+left ( 1-lambda right )x_2 right )in S :forall :lambda inleft ( 0,1 right )$

$Cy=Cleft ( lambda x_1+left ( 1-lambda right )x_2 right )=lambda Cx_1+left ( 1-lambda right )Cx_2$

$Rightarrow Cyleq lambda alpha+left ( 1-lambda right )alpha$

$Rightarrow Cyleq alpha$

$Rightarrow yin S$

Therefore, $S$ is a convex set.

Step 2 − Prove that the set $S=left { left ( x_1,x_2 right )in mathbb{R}^2:x_{1}^{2}leq 8x_2 right }$ is a convex set.

Solution

Let $x,y in S$

Let $x=left ( x_1,x_2 right )$ and $y=left ( y_1,y_2 right )$

$Rightarrow x_{1}^{2}leq 8x_2$ and $y_{1}^{2}leq 8y_2$

To show − $lambda x+left ( 1-lambda right )yin SRightarrow lambda left ( x_1,x_2 right )+left (1-lambda right )left ( y_1,y_2 right ) in SRightarrow left [ lambda x_1+left ( 1- lambda)y_2] in Sright ) right ]$

$Now, left [lambda x_1+left ( 1-lambda right )y_1 right ]^{2}=lambda ^2x_{1}^{2}+left ( 1-lambda right )^2y_{1}^{2}+2 lambdaleft ( 1-lambda right )x_1y_1$

But $2x_1y_1leq x_{1}^{2}+y_{1}^{2}$

Therefore,

$left [ lambda x_1 +left ( 1-lambda right )y_1right ]^{2}leq lambda ^2x_{1}^{2}+left ( 1- lambda right )^2y_{1}^{2}+2 lambdaleft ( 1- lambda right )left ( x_{1}^{2}+y_{1}^{2} right )$

$Rightarrow left [ lambda x_1+left ( 1-lambda right )y_1 right ]^{2}leq lambda x_{1}^{2}+left ( 1- lambda right )y_{1}^{2}$

$Rightarrow left [ lambda x_1+left ( 1-lambda right )y_1 right ]^{2}leq 8lambda x_2+8left ( 1- lambda right )y_2$

$Rightarrow left [ lambda x_1+left ( 1-lambda right )y_1 right ]^{2}leq 8left [lambda x_2+left ( 1- lambda right )y_2 right ]$

$Rightarrow lambda x+left ( 1- lambda right )y in S$

Step 3 − Show that a set $S in mathbb{R}^n$ is convex if and only if for each integer k, every convex combination of any k points of $S$ is in $S$.

Solution

Let $S$ be a convex set. then, to show;

$c_1x_1+c_2x_2+…..+c_kx_k in S, displaystylesumlimits_{1}^k c_i=1,c_igeq 0, forall i in 1,2,….,k$

Proof by induction

For $k=1,x_1 in S, c_1=1 Rightarrow c_1x_1 in S$

For $k=2,x_1,x_2 in S, c_1+c_2=1$ and Since S is a convex set

$Rightarrow c_1x_1+c_2x_2 in S.$

Let the convex combination of m points of S is in S i.e.,

$c_1x_1+c_2x_2+…+c_mx_m in S,displaystylesumlimits_{1}^m c_i=1 ,c_i geq 0, forall i in 1,2,…,m$

Now, Let $x_1,x_2….,x_m,x_{m+1} in S$

Let $x=mu_1x_1+mu_2x_2+…+mu_mx_m+mu_{m+1}x_{m+1}$

Let $x=left ( mu_1+mu_2+…+mu_m right )frac{mu_1x_1+mu_2x_2+mu_mx_m}{mu_1+mu_2+………+mu_m}+mu_{m+1}x_{m+1}$

Let $y=frac{mu_1x_1+mu_2x_2+…+mu_mx_m}{mu_1+mu_2+………+mu_m}$

$Rightarrow x=left ( mu_1+mu_2+…+mu_m right )y+mu_{m+1}x_{m+1}$

Now $y in S$ because the sum of the coefficients is 1.

$Rightarrow x in S$ since S is a convex set and $y,x_{m+1} in S$

Hence proved by induction.

Convex Optimization – affine Set

A set $A$ is said to be an affine set if for any two distinct points, the line passing through these points lie in the set $A$.

Note −

-

$S$ is an affine set if and only if it contains every affine combination of its points.

-

Empty and singleton sets are both affine and convex set.

For example, solution of a linear equation is an affine set.

Proof

Let S be the solution of a linear equation.

By definition, $S=left { x in mathbb{R}^n:Ax=b right }$

Let $x_1,x_2 in SRightarrow Ax_1=b$ and $Ax_2=b$

To prove : $Aleft [ theta x_1+left ( 1-theta right )x_2 right ]=b, forall theta inleft ( 0,1 right )$

$Aleft [ theta x_1+left ( 1-theta right )x_2 right ]=theta Ax_1+left ( 1-theta right )Ax_2=theta b+left ( 1-theta right )b=b$

Thus S is an affine set.

Theorem

If $C$ is an affine set and $x_0 in C$, then the set $V= C-x_0=left { x-x_0:x in C right }$ is a subspace of C.

Proof

Let $x_1,x_2 in V$

To show: $alpha x_1+beta x_2 in V$ for some $alpha,beta$

Now, $x_1+x_0 in C$ and $x_2+x_0 in C$ by definition of V

Now, $alpha x_1+beta x_2+x_0=alpha left ( x_1+x_0 right )+beta left ( x_2+x_0 right )+left ( 1-alpha -beta right )x_0$

But $alpha left ( x_1+x_0 right )+beta left ( x_2+x_0 right )+left ( 1-alpha -beta right )x_0 in C$ because C is an affine set.

Therefore, $alpha x_1+beta x_2 in V$

Hence proved.

Convex Optimization – Hull

The convex hull of a set of points in S is the boundary of the smallest convex region that contain all the points of S inside it or on its boundary.

OR

Let $Ssubseteq mathbb{R}^n$ The convex hull of S, denoted $Coleft ( S right )$ by is the collection of all convex combination of S, i.e., $x in Coleft ( S right )$ if and only if $x in displaystylesumlimits_{i=1}^n lambda_ix_i$, where $displaystylesumlimits_{1}^n lambda_i=1$ and $lambda_i geq 0 forall x_i in S$

Remark − Conves hull of a set of points in S in the plane defines a convex polygon and the points of S on the boundary of the polygon defines the vertices of the polygon.

Theorem $Coleft ( S right )= left { x:x=displaystylesumlimits_{i=1}^n lambda_ix_i,x_i in S, displaystylesumlimits_{i=1}^n lambda_i=1,lambda_i geq 0 right }$ Show that a convex hull is a convex set.

Proof

Let $x_1,x_2 in Coleft ( S right )$, then $x_1=displaystylesumlimits_{i=1}^n lambda_ix_i$ and $x_2=displaystylesumlimits_{i=1}^n lambda_gamma x_i$ where $displaystylesumlimits_{i=1}^n lambda_i=1, lambda_igeq 0$ and $displaystylesumlimits_{i=1}^n gamma_i=1,gamma_igeq0$

For $theta in left ( 0,1 right ),theta x_1+left ( 1-theta right )x_2=theta displaystylesumlimits_{i=1}^n lambda_ix_i+left ( 1-theta right )displaystylesumlimits_{i=1}^n gamma_ix_i$

$theta x_1+left ( 1-theta right )x_2=displaystylesumlimits_{i=1}^n lambda_i theta x_i+displaystylesumlimits_{i=1}^n gamma_ileft ( 1-theta right )x_i$

$theta x_1+left ( 1-theta right )x_2=displaystylesumlimits_{i=1}^nleft [ lambda_itheta +gamma_ileft ( 1-theta right ) right ]x_i$

Considering the coefficients,

$displaystylesumlimits_{i=1}^nleft [ lambda_itheta +gamma_ileft ( 1-theta right ) right ]=theta displaystylesumlimits_{i=1}^n lambda_i+left ( 1-theta right )displaystylesumlimits_{i=1}^ngamma_i=theta +left ( 1-theta right )=1$

Hence, $theta x_1+left ( 1-theta right )x_2 in Coleft ( S right )$

Thus, a convex hull is a convex set.

Caratheodory Theorem

Let S be an arbitrary set in $mathbb{R}^n$.If $x in Coleft ( S right )$, then $x in Coleft ( x_1,x_2,….,x_n,x_{n+1} right )$.

Proof

Since $x in Coleft ( Sright )$, then $x$ is representated by a convex combination of a finite number of points in S, i.e.,

$x=displaystylesumlimits_{j=1}^k lambda_jx_j,displaystylesumlimits_{j=1}^k lambda_j=1, lambda_j geq 0$ and $x_j in S, forall j in left ( 1,k right )$

If $k leq n+1$, the result obtained is obviously true.

If $k geq n+1$, then $left ( x_2-x_1 right )left ( x_3-x_1 right ),….., left ( x_k-x_1 right )$ are linearly dependent.

$Rightarrow exists mu _j in mathbb{R}, 2leq jleq k$ (not all zero) such that $displaystylesumlimits_{j=2}^k mu _jleft ( x_j-x_1 right )=0$

Define $mu_1=-displaystylesumlimits_{j=2}^k mu _j$, then $displaystylesumlimits_{j=1}^k mu_j x_j=0, displaystylesumlimits_{j=1}^k mu_j=0$

where not all $mu_j”s$ are equal to zero. Since $displaystylesumlimits_{j=1}^k mu_j=0$, at least one of the $mu_j > 0,1 leq j leq k$

Then, $x=displaystylesumlimits_{1}^k lambda_j x_j+0$

$x=displaystylesumlimits_{1}^k lambda_j x_j- alpha displaystylesumlimits_{1}^k mu_j x_j$

$x=displaystylesumlimits_{1}^kleft ( lambda_j- alphamu_j right )x_j $

Choose $alpha$ such that $alpha=minleft { frac{lambda_j}{mu_j}, mu_jgeq 0 right }=frac{lambda_j}{mu _j},$ for some $i=1,2,…,k$

If $mu_jleq 0, lambda_j-alpha mu_jgeq 0$

If $mu_j> 0, then :frac{lambda _j}{mu_j}geq frac{lambda_i}{mu _i}=alpha Rightarrow lambda_j-alpha mu_jgeq 0, j=1,2,…k$

In particular, $lambda_i-alpha mu_i=0$, by definition of $alpha$

$x=displaystylesumlimits_{j=1}^k left ( lambda_j- alphamu_jright )x_j$,where

$lambda_j- alphamu_jgeq0$ and $displaystylesumlimits_{j=1}^kleft ( lambda_j- alphamu_jright )=1$ and $lambda_i- alphamu_i=0$

Thus, x can be representated as a convex combination of at most (k-1) points.

This reduction process can be repeated until x is representated as a convex combination of (n+1) elements.

Convex Optimization – Weierstrass Theorem

Let S be a non empty, closed and bounded set (also called compact set) in $mathbb{R}^n$ and let $f:Srightarrow mathbb{R} $ be a continuous function on S, then the problem min $left { fleft ( x right ):x in S right }$ attains its minimum.

Proof

Since S is non-empty and bounded, there exists a lower bound.

$alpha =Infleft { fleft ( x right ):x in S right }$

Now let $S_j=left { x in S:alpha leq fleft ( x right ) leq alpha +delta ^jright } forall j=1,2,…$ and $delta in left ( 0,1 right )$

By the definition of infimium, $S_j$ is non-empty, for each $j$.

Choose some $x_j in S_j$ to get a sequence $left { x_j right }$ for $j=1,2,…$

Since S is bounded, the sequence is also bounded and there is a convergent subsequence $left { y_j right }$, which converges to $hat{x}$. Hence $hat{x}$ is a limit point and S is closed, therefore, $hat{x} in S$. Since f is continuous, $fleft ( y_i right )rightarrow fleft ( hat{x} right )$.

Since $alpha leq fleft ( y_i right )leq alpha+delta^k, alpha=displaystylelim_{krightarrow infty}fleft ( y_i right )=fleft ( hat{x} right )$

Thus, $hat{x}$ is the minimizing solution.

Remarks

There are two important necessary conditions for Weierstrass Theorem to hold. These are as follows −

-

Step 1 − The set S should be a bounded set.

Consider the function fleft ( x right )=x$.

It is an unbounded set and it does have a minima at any point in its domain.

Thus, for minima to obtain, S should be bounded.

-

Step 2 − The set S should be closed.

Consider the function $fleft ( x right )=frac{1}{x}$ in the domain left ( 0,1 right ).

This function is not closed in the given domain and its minima also does not exist.

Hence, for minima to obtain, S should be closed.

Convex Optimization – Closest Point Theorem

Let S be a non-empty closed convex set in $mathbb{R}^n$ and let $ynotin S$, then $exists$ a point $bar{x}in S$ with minimum distance from y, i.e.,$left | y-bar{x} right | leq left | y-x right | forall x in S.$

Furthermore, $bar{x}$ is a minimizing point if and only if $left ( y-hat{x} right )^{T}left ( x-hat{x} right )leq 0$ or $left ( y-hat{x}, x-hat{x} right )leq 0$

Proof

Existence of closest point

Since $Sne phi,exists$ a point $hat{x}in S$ such that the minimum distance of S from y is less than or equal to $left | y-hat{x} right |$.

Define $hat{S}=S cap left { x:left | y-x right |leq left | y-hat{x} right | right }$

Since $ hat{S}$ is closed and bounded, and since norm is a continuous function, then by Weierstrass theorem, there exists a minimum point $hat{x} in S$ such that $left | y-hat{x} right |=Infleft { left | y-x right |,x in S right }$

Uniqueness

Suppose $bar{x} in S$ such that $left | y-hat{x} right |=left | y-hat{x} right |= alpha$

Since S is convex, $frac{hat{x}+bar{x}}{2} in S$

But, $left | y-frac{hat{x}-bar{x}}{2} right |leq frac{1}{2}left | y-hat{x} right |+frac{1}{2}left | y-bar{x} right |=alpha$

It can”t be strict inequality because $hat{x}$ is closest to y.

Therefore, $left | y-hat{x} right |=mu left | y-hat{x} right |$, for some $mu$

Now $left | mu right |=1.$ If $mu=-1$, then $left ( y-hat{x} right )=-left ( y-hat{x} right )Rightarrow y=frac{hat{x}+bar{x}}{2} in S$

But $y in S$. Hence contradiction. Thus $mu=1 Rightarrow hat{x}=bar{x}$

Thus, minimizing point is unique.

For the second part of the proof, assume $left ( y-hat{x} right )^{tau }left ( x-bar{x} right )leq 0$ for all $xin S$

Now,

$left | y-x right |^{2}=left | y-hat{x}+ hat{x}-xright |^{2}=left | y-hat{x} right |^{2}+left |hat{x}-x right |^{2}+2left (hat{x}-x right )^{tau }left ( y-hat{x} right )$

$Rightarrow left | y-x right |^{2}geq left | y-hat{x} right |^{2}$ because $left | hat{x}-x right |^{2}geq 0$ and $left ( hat{x}- xright )^{T}left ( y-hat{x} right )geq 0$

Thus, $hat{x}$ is minimizing point.

Conversely, assume $hat{x}$ is minimizimg point.

$Rightarrow left | y-x right |^{2}geq left | y-hat{x} right |^2 forall x in S$

Since S is convex set.

$Rightarrow lambda x+left ( 1-lambda right )hat{x}=hat{x}+lambdaleft ( x-hat{x} right ) in S$ for $x in S$ and $lambda in left ( 0,1 right )$

Now, $left | y-hat{x}-lambdaleft ( x-hat{x} right ) right |^{2}geq left | y-hat{x} right |^2$

And

$left | y-hat{x}-lambdaleft ( x-hat{x} right ) right |^{2}=left | y-hat{x} right |^{2}+lambda^2left | x-hat{x} right |^{2}-2lambdaleft ( y-hat{x} right )^{T}left ( x-hat{x} right )$

$Rightarrow left | y-hat{x} right |^{2}+lambda^{2}left | x-hat{x} right |-2 lambdaleft ( y-hat{x} right )^{T}left ( x-hat{x} right )geq left | y-hat{x} right |^{2}$

$Rightarrow 2 lambdaleft ( y-hat{x} right )^{T}left ( x-hat{x} right )leq lambda^2left | x-hat{x} right |^2$

$Rightarrow left ( y-hat{x} right )^{T}left ( x-hat{x} right )leq 0$

Hence Proved.

Fundamental Separation Theorem

Let S be a non-empty closed, convex set in $mathbb{R}^n$ and $y notin S$. Then, there exists a non zero vector $p$ and scalar $beta$ such that $p^T y>beta$ and $p^T x

Proof

Since S is non empty closed convex set and $y notin S$ thus by closest point theorem, there exists a unique minimizing point $hat{x} in S$ such that

$left ( x-hat{x} right )^Tleft ( y-hat{x} right )leq 0 forall x in S$

Let $p=left ( y-hat{x} right )neq 0$ and $beta=hat{x}^Tleft ( y-hat{x} right )=p^That{x}$.

Then $left ( x-hat{x} right )^Tleft ( y-hat{x} right )leq 0$

$Rightarrow left ( y-hat{x} right )^Tleft ( x-hat{x} right )leq 0$

$Rightarrow left ( y-hat{x} right )^Txleq left ( y-hat{x} right )^T hat{x}=hat{x}^Tleft ( y-hat{x} right )$ i,e., $p^Tx leq beta$

Also, $p^Ty-beta=left ( y-hat{x} right )^Ty-hat{x}^T left ( y-hat{x} right )$

$=left ( y-hat{x} right )^T left ( y-x right )=left | y-hat{x} right |^{2}>0$

$Rightarrow p^Ty> beta$

This theorem results in separating hyperplanes. The hyperplanes based on the above theorem can be defined as follows −

Let $S_1$ and $S_2$ are be non-empty subsets of $mathbb{R}$ and $H=left { X:A^TX=b right }$ be a hyperplane.

-

The hyperplane H is said to separate $S_1$ and $S_2$ if $A^TX leq b forall X in S_1$ and $A_TX geq b forall X in S_2$

-

The hyperplane H is said to strictly separate $S_1$ and $S_2$ if $A^TX b forall X in S_2$

-

The hyperplane H is said to strongly separate $S_1$ and $S_2$ if $A^TX leq b forall X in S_1$ and $A_TX geq b+ varepsilon forall X in S_2$, where $varepsilon$ is a positive scalar.

Convex Optimization – Cones

A non empty set C in $mathbb{R}^n$ is said to be cone with vertex 0 if $x in CRightarrow lambda x in C forall lambda geq 0$.

A set C is a convex cone if it convex as well as cone.

For example, $y=left | x right |$ is not a convex cone because it is not convex.

But, $y geq left | x right |$ is a convex cone because it is convex as well as cone.

Note − A cone C is convex if and only if for any $x,y in C, x+y in C$.

Proof

Since C is cone, for $x,y in C Rightarrow lambda x in C$ and $mu y in C :forall :lambda, mu geq 0$

C is convex if $lambda x + left ( 1-lambda right )y in C : forall :lambda in left ( 0, 1 right )$

Since C is cone, $lambda x in C$ and $left ( 1-lambda right )y in C Leftrightarrow x,y in C$

Thus C is convex if $x+y in C$

In general, if $x_1,x_2 in C$, then, $lambda_1x_1+lambda_2x_2 in C, forall lambda_1,lambda_2 geq 0$

Examples

-

The conic combination of infinite set of vectors in $mathbb{R}^n$ is a convex cone.

-

Any empty set is a convex cone.

-

Any linear function is a convex cone.

-

Since a hyperplane is linear, it is also a convex cone.

-

Closed half spaces are also convex cones.

Note − The intersection of two convex cones is a convex cone but their union may or may not be a convex cone.

Convex Optimization – Polar Cone

Let S be a non empty set in $mathbb{R}^n$ Then, the polar cone of S denoted by $S^*$ is given by $S^*=left {p in mathbb{R}^n, p^Tx leq 0 : forall x in S right }$.

Remark

-

Polar cone is always convex even if S is not convex.

-

If S is empty set, $S^*=mathbb{R}^n$.

-

Polarity may be seen as a generalisation of orthogonality.

Let $Csubseteq mathbb{R}^n$ then the orthogonal space of C, denoted by $C^perp =left { y in mathbb{R}^n:left langle x,y right rangle=0 forall x in C right }$.

Lemma

Let $S,S_1$ and $S_2$ be non empty sets in $mathbb{R}^n$ then the following statements are true −

-

$S^*$ is a closed convex cone.

-

$S subseteq S^{**}$ where $S^{**}$ is a polar cone of $S^*$.

-

$S_1 subseteq S_2 Rightarrow S_{2}^{*} subseteq S_{1}^{*}$.

Proof

Step 1 − $S^*=left { p in mathbb{R}^n,p^Txleq 0 : forall 😡 in S right }$

-

Let $x_1,x_2 in S^*Rightarrow x_{1}^{T}xleq 0 $ and $x_{2}^{T}x leq 0,forall x in S$

For $lambda in left ( 0, 1 right ),left [ lambda x_1+left ( 1-lambda right )x_2 right ]^Tx=left [ left ( lambda x_1 right )^T+ left {left ( 1-lambda right )x_{2} right }^{T}right ]x, forall x in S$

$=left [ lambda x_{1}^{T} +left ( 1-lambda right )x_{2}^{T}right ]x=lambda x_{1}^{T}x+left ( 1-lambda right )x_{2}^{T}leq 0$

Thus $lambda x_1+left ( 1-lambda right )x_{2} in S^*$

Therefore $S^*$ is a convex set.

-

For $lambda geq 0,p^{T}x leq 0, forall 😡 in S$

Therefore, $lambda p^T x leq 0,$

$Rightarrow left ( lambda p right )^T x leq 0$

$Rightarrow lambda p in S^*$

Thus, $S^*$ is a cone.

-

To show $S^*$ is closed, i.e., to show if $p_n rightarrow p$ as $n rightarrow infty$, then $p in S^*$

$forall x in S, p_{n}^{T}x-p^T x=left ( p_n-p right )^T x$

As $p_n rightarrow p$ as $n rightarrow infty Rightarrow left ( p_n rightarrow p right )rightarrow 0$

Therefore $p_{n}^{T}x rightarrow p^{T}x$. But $p_{n}^{T}x leq 0, : forall x in S$

Thus, $p^Tx leq 0, forall x in S$

$Rightarrow p in S^*$

Hence, $S^*$ is closed.

Step 2 − $S^{**}=left { q in mathbb{R}^n:q^T p leq 0, forall p in S^*right }$

Let $x in S$, then $ forall p in S^*, p^T x leq 0 Rightarrow x^Tp leq 0 Rightarrow x in S^{**}$

Thus, $S subseteq S^{**}$

Step 3 − $S_2^*=left { p in mathbb{R}^n:p^Txleq 0, forall x in S_2 right }$

Since $S_1 subseteq S_2 Rightarrow forall x in S_2 Rightarrow forall x in S_1$

Therefore, if $hat{p} in S_2^*, $then $hat{p}^Tx leq 0,forall x in S_2$

$Rightarrow hat{p}^Txleq 0, forall x in S_1$

$Rightarrow hat{p}^T in S_1^*$

$Rightarrow S_2^* subseteq S_1^*$

Theorem

Let C be a non empty closed convex cone, then $C=C^**$

Proof

$C=C^{**}$ by previous lemma.

To prove : $x in C^{**} subseteq C$

Let $x in C^{**}$ and let $x notin C$

Then by fundamental separation theorem, there exists a vector $p neq 0$ and a scalar $alpha$ such that $p^Ty leq alpha, forall y in C$

Therefore, $p^Tx > alpha$

But since $left ( y=0 right ) in C$ and $p^Tyleq alpha, forall y in C Rightarrow alphageq 0$ and $p^Tx>0$

If $p notin C^*$, then there exists some $bar{y} in C$ such that $p^T bar{y}>0$ and $p^Tleft ( lambda bar{y} right )$ can be made arbitrarily large by taking $lambda$ sufficiently large.

This contradicts with the fact that $p^Ty leq alpha, forall y in C$

Therefore,$p in C^*$

Since $x in C^*=left { q:q^Tpleq 0, forall p in C^* right }$

Therefore, $x^Tp leq 0 Rightarrow p^Tx leq 0$

But $p^Tx> alpha$

Thus is contardiction.

Thus, $x in C$

Hence $C=C^{**}$.

Convex Optimization – Conic Combination

A point of the form $alpha_1x_1+alpha_2x_2+….+alpha_nx_n$ with $alpha_1, alpha_2,…,alpha_ngeq 0$ is called conic combination of $x_1, x_2,…,x_n.$

-

If $x_i$ are in convex cone C, then every conic combination of $x_i$ is also in C.

-

A set C is a convex cone if it contains all the conic combination of its elements.

Conic Hull

A conic hull is defined as a set of all conic combinations of a given set S and is denoted by coni(S).

Thus, $conileft ( S right )=left { displaystylesumlimits_{i=1}^k lambda_ix_i:x_i in S,lambda_iin mathbb{R}, lambda_igeq 0,i=1,2,…right }$

- The conic hull is a convex set.

- The origin always belong to the conic hull.

Convex Optimization – Polyhedral Set

A set in $mathbb{R}^n$ is said to be polyhedral if it is the intersection of a finite number of closed half spaces, i.e.,

$S=left { x in mathbb{R}^n:p_{i}^{T}xleq alpha_i, i=1,2,….,n right }$

For example,

-

$left { x in mathbb{R}^n:AX=b right }$

-

$left { x in mathbb{R}^n:AXleq b right }$

-

$left { x in mathbb{R}^n:AXgeq b right }$

Polyhedral Cone

A set in $mathbb{R}^n$ is said to be polyhedral cone if it is the intersection of a finite number of half spaces that contain the origin, i.e., $S=left { x in mathbb{R}^n:p_{i}^{T}xleq 0, i=1, 2,… right }$

Polytope

A polytope is a polyhedral set which is bounded.

Remarks

- A polytope is a convex hull of a finite set of points.

- A polyhedral cone is generated by a finite set of vectors.

- A polyhedral set is a closed set.

- A polyhedral set is a convex set.

Extreme point of a convex set

Let S be a convex set in $mathbb{R}^n$. A vector $x in S$ is said to be a extreme point of S if $x= lambda x_1+left ( 1-lambda right )x_2$ with $x_1, x_2 in S$ and $lambda inleft ( 0, 1 right )Rightarrow x=x_1=x_2$.

Example

Step 1 − $S=left { left ( x_1,x_2 right ) in mathbb{R}^2:x_{1}^{2}+x_{2}^{2}leq 1 right }$

Extreme point, $E=left { left ( x_1, x_2 right )in mathbb{R}^2:x_{1}^{2}+x_{2}^{2}= 1 right }$

Step 2 − $S=left { left ( x_1,x_2 right )in mathbb{R}^2:x_1+x_2

Extreme point, $E=left { left ( 0, 0 right), left ( 2, 0 right), left ( 0, 1 right), left ( frac{2}{3}, frac{4}{3} right) right }$

Step 3 − S is the polytope made by the points $left { left ( 0,0 right ), left ( 1,1 right ), left ( 1,3 right ), left ( -2,4 right ),left ( 0,2 right ) right }$

Extreme point, $E=left { left ( 0,0 right ), left ( 1,1 right ),left ( 1,3 right ),left ( -2,4 right ) right }$

Remarks

-

Any point of the convex set S, can be represented as a convex combination of its extreme points.

-

It is only true for closed and bounded sets in $mathbb{R}^n$.

-

It may not be true for unbounded sets.

k extreme points

A point in a convex set is called k extreme if and only if it is the interior point of a k-dimensional convex set within S, and it is not an interior point of a (k+1)- dimensional convex set within S. Basically, for a convex set S, k extreme points make k-dimensional open faces.

Convex Optimization – Direction

Let S be a closed convex set in $mathbb{R}^n$. A non zero vector $d in mathbb{R}^n$ is called a direction of S if for each $x in S,x+lambda d in S, forall lambda geq 0.$

-

Two directions $d_1$ and $d_2$ of S are called distinct if $d neq alpha d_2$ for $ alpha>0$.

-

A direction $d$ of $S$ is said to be extreme direction if it cannot be written as a positive linear combination of two distinct directions, i.e., if $d=lambda _1d_1+lambda _2d_2$ for $lambda _1, lambda _2>0$, then $d_1= alpha d_2$ for some $alpha$.

-

Any other direction can be expressed as a positive combination of extreme directions.

-

For a convex set $S$, the direction d such that $x+lambda d in S$ for some $x in S$ and all $lambda geq0$ is called recessive for $S$.

-

Let E be the set of the points where a certain function $f:S rightarrow$ over a non-empty convex set S in $mathbb{R}^n$ attains its maximum, then $E$ is called exposed face of $S$. The directions of exposed faces are called exposed directions.

-

A ray whose direction is an extreme direction is called an extreme ray.

Example

Consider the function $fleft ( x right )=y=left |x right |$, where $x in mathbb{R}^n$. Let d be unit vector in $mathbb{R}^n$

Then, d is the direction for the function f because for any $lambda geq 0, x+lambda d in fleft ( x right )$.

Convex and Concave Function

Let $f:S rightarrow mathbb{R}$, where S is non empty convex set in $mathbb{R}^n$, then $fleft ( x right )$ is said to be convex on S if $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right ), forall lambda in left ( 0,1 right )$.

On the other hand, Let $f:Srightarrow mathbb{R}$, where S is non empty convex set in $mathbb{R}^n$, then $fleft ( x right )$ is said to be concave on S if $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )geq lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right ), forall lambda in left ( 0, 1 right )$.

Let $f:S rightarrow mathbb{R}$ where S is non empty convex set in $mathbb{R}^n$, then $fleft ( xright )$ is said to be strictly convex on S if $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )

Let $f:S rightarrow mathbb{R}$ where S is non empty convex set in $mathbb{R}^n$, then $fleft ( xright )$ is said to be strictly concave on S if $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )> lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right ), forall lambda in left ( 0, 1 right )$.

Examples

-

A linear function is both convex and concave.

-

$fleft ( x right )=left | x right |$ is a convex function.

-

$fleft ( x right )= frac{1}{x}$ is a convex function.

Theorem

Let $f_1,f_2,…,f_k:mathbb{R}^n rightarrow mathbb{R}$ be convex functions. Consider the function $fleft ( x right )=displaystylesumlimits_{j=1}^k alpha_jf_jleft ( x right )$ where $alpha_j>0,j=1, 2, …k,$ then $fleft ( x right )$is a convex function.

Proof

Since $f_1,f_2,…f_k$ are convex functions

Therefore, $f_ileft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda f_ileft ( x_1 right )+left ( 1-lambda right )f_ileft ( x_2 right ), forall lambda in left ( 0, 1 right )$ and $i=1, 2,….,k$

Consider the function $fleft ( x right )$.

Therefore,

$ fleft ( lambda x_1+left ( 1-lambda right )x_2 right )$

$=displaystylesumlimits_{j=1}^k alpha_jf_jleft ( lambda x_1 +1-lambda right )x_2leq displaystylesumlimits_{j=1}^kalpha_jlambda f_jleft ( x_1 right )+left ( 1-lambda right )f_jleft ( x_2 right )$

$Rightarrow fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda left ( displaystylesumlimits_{j=1}^k alpha _jf_jleft ( x_1 right ) right )+left ( displaystylesumlimits_{j=1}^k alpha _jf_jleft ( x_2 right ) right )$

$Rightarrow fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda fleft ( x_2 right )leq left ( 1-lambda right )fleft ( x_2 right )$

Hence, $fleft ( xright )$ is a convex function.

Theorem

Let $fleft ( xright )$ be a convex function on a convex set $Ssubset mathbb{R}^n$ then a local minima of $fleft ( xright )$ on S is a global minima.

Proof

Let $hat{x}$ be a local minima for $fleft ( x right )$ and $hat{x}$ is not global minima.

therefore, $exists hat{x} in S$ such that $fleft ( bar{x} right )

Since $hat{x}$ is a local minima, there exists neighbourhood $N_varepsilon left ( hat{x} right )$ such that $fleft ( hat{x} right )leq fleft ( x right ), forall x in N_varepsilon left ( hat{x} right )cap S$

But $fleft ( x right )$ is a convex function on S, therefore for $lambda in left ( 0, 1 right )$

we have $lambda hat{x}+left ( 1-lambda right )bar{x}leq lambda fleft ( hat{x} right )+left ( 1-lambda right )fleft ( bar{x} right )$

$Rightarrow lambda hat{x}+left ( 1-lambda right )bar{x}

$Rightarrow lambda hat{x}+left ( 1-lambda right )bar{x}

But for some $lambda

$lambda hat{x}+left ( 1-lambda right )bar{x} in N_varepsilon left ( hat{x} right )cap S$ and $fleft ( lambda hat{x}+left ( 1-lambda right )bar{x} right )

which is a contradiction.

Hence, $bar{x}$ is a global minima.

Epigraph

let S be a non-empty subset in $mathbb{R}^n$ and let $f:S rightarrow mathbb{R}$ then the epigraph of f denoted by epi(f) or $E_f$ is a subset of $mathbb{R}^n+1$ defined by $E_f=left { left ( x,alpha right ):x in mathbb{R}^n, alpha in mathbb{R}, fleft ( x right )leq alpha right }$

Hypograph

let S be a non-empty subset in $mathbb{R}^n$ and let $f:S rightarrow mathbb{R}$, then the hypograph of f denoted by hyp(f) or $H_f=left { left ( x, alpha right ):x in mathbb{R}^n, alpha in mathbb{R}^n, alpha in mathbb{R}, fleft ( x right )geq alpha right }$

Theorem

Let S be a non-empty convex set in $mathbb{R}^n$ and let $f:S rightarrow mathbb{R}^n$, then f is convex if and only if its epigraph $E_f$ is a convex set.

Proof

Let f is a convex function.

To show $E_f$ is a convex set.

Let $left ( x_1, alpha_1 right ),left ( x_2, alpha_2 right ) in E_f,lambda inleft ( 0, 1 right )$

To show $lambda left ( x_1,alpha_1 right )+left ( 1-lambda right )left ( x_2, alpha_2 right ) in E_f$

$Rightarrow left [ lambda x_1+left ( 1-lambda right )x_2, lambda alpha_1+left ( 1-lambda right )alpha_2 right ]in E_f$

$fleft ( x_1 right )leq alpha _1, fleft ( x_2right )leq alpha _2$

Therefore, $fleft (lambda x_1+left ( 1-lambda right )x_2 right )leq lambda fleft ( x_1 right )+left ( 1-lambda right )f left ( x_2 right )$

$Rightarrow fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda alpha_1+left ( 1-lambda right )alpha_2$

Converse

Let $E_f$ is a convex set.

To show f is convex.

i.e., to show if $x_1, x_2 in S,lambda left ( 0, 1right )$

$fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right )$

Let $x_1,x_2 in S, lambda in left ( 0, 1 right ),fleft ( x_1 right ), fleft ( x_2 right ) in mathbb{R}$

Since $E_f$ is a convex set, $left ( lambda x_1+left ( 1-lambda right )x_2, lambda fleft ( x_1 right )+left ( 1-lambda right )right )fleft ( x_2 right )in E_f$

Therefore, $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right )$

Convex Optimization – Jensen”s Inequality

Let S be a non-empty convex set in $mathbb{R}^n$ and $f:S rightarrow mathbb{R}^n$. Then f is convex if and only if for each integer $k>0$

$x_1,x_2,…x_k in S, displaystylesumlimits_{i=1}^k lambda_i=1, lambda_igeq 0, forall i=1,2,s,k$, we have $fleft ( displaystylesumlimits_{i=1}^k lambda_ix_i right )leq displaystylesumlimits_{i=1}^k lambda _ifleft ( x right )$

Proof

By induction on k.

$k=1:x_1 in S$ Therefore $fleft ( lambda_1 x_1right ) leq lambda_i fleft (x_1right )$ because $lambda_i=1$.

$k=2:lambda_1+lambda_2=1$ and $x_1, x_2 in S$

Therefore, $lambda_1x_1+lambda_2x_2 in S$

Hence by definition, $fleft ( lambda_1 x_1 +lambda_2 x_2 right )leq lambda _1fleft ( x_1 right )+lambda _2fleft ( x_2 right )$

Let the statement is true for $n

Therefore,

$fleft ( lambda_1 x_1+ lambda_2 x_2+….+lambda_k x_kright )leq lambda_1 fleft (x_1 right )+lambda_2 fleft (x_2 right )+…+lambda_k fleft (x_k right )$

$k=n+1:$ Let $x_1, x_2,….x_n,x_{n+1} in S$ and $displaystylesumlimits_{i=1}^{n+1}=1$

Therefore $mu_1x_1+mu_2x_2+…….+mu_nx_n+mu_{n+1} x_{n+1} in S$

thus,$fleft (mu_1x_1+mu_2x_2+…+mu_nx_n+mu_{n+1} x_{n+1} right )$

$=fleft ( left ( mu_1+mu_2+…+mu_n right)frac{mu_1x_1+mu_2x_2+…+mu_nx_n}{mu_1+mu_2+mu_3}+mu_{n+1}x_{n+1} right)$

$=fleft ( mu_y+mu_{n+1}x_{n+1} right )$ where $mu=mu_1+mu_2+…+mu_n$ and

$y=frac{mu_1x_1+mu_2x_2+…+mu_nx_n}{mu_1+mu_2+…+mu_n}$ and also $mu_1+mu_{n+1}=1,y in S$

$Rightarrow fleft ( mu_1x_1+mu_2x_2+…+mu_nx_n+mu_{n+1}x_{n+1}right ) leq mu fleft ( y right )+mu_{n+1} fleft ( x_{n+1} right )$

$Rightarrow fleft ( mu_1x_1+mu_2x_2+…+mu_nx_n+mu_{n+1}x_{n+1}right ) leq$

$left ( mu_1+mu_2+…+mu_n right )fleft ( frac{mu_1x_1+mu_2x_2+…+mu_nx_n}{mu_1+mu_2+…+mu_n} right )+mu_{n+1}fleft ( x_{n+1} right )$

$Rightarrow fleft ( mu_1x_1+mu_2x_2+…+mu_nx_n +mu_{n+1}x_{n+1}right )leq left ( mu_1+ mu_2+ …+mu_n right )$

$left [ frac{mu_1}{mu_1+ mu_2+ …+mu_n}fleft ( x_1 right )+…+frac{mu_n}{mu_1+ mu_2+ …+mu_n}fleft ( x_n right ) right ]+mu_{n+1}fleft ( x_{n+1} right )$

$Rightarrow fleft ( mu_1x_1+mu_2x_2+…+mu_nx_n+mu_{n+1}x_{n+1}right )leq mu_1fleft ( x_1 right )+mu_2fleft ( x_2 right )+….$

Hence Proved.

Convex Optimization – Differentiable Function

Let S be a non-empty open set in $mathbb{R}^n$,then $f:Srightarrow mathbb{R}$ is said to be differentiable at $hat{x} in S$ if there exist a vector $bigtriangledown fleft ( hat{x} right )$ called gradient vector and a function $alpha :mathbb{R}^nrightarrow mathbb{R}$ such that

$fleft ( x right )=fleft ( hat{x} right )+bigtriangledown fleft ( hat{x} right )^Tleft ( x-hat{x} right )+left | x=hat{x} right |alpha left ( hat{x}, x-hat{x} right ), forall x in S$ where

$alpha left (hat{x}, x-hat{x} right )rightarrow 0 bigtriangledown fleft ( hat{x} right )=left [ frac{partial f}{partial x_1}frac{partial f}{partial x_2}…frac{partial f}{partial x_n} right ]_{x=hat{x}}^{T}$

Theorem

let S be a non-empty, open convexset in $mathbb{R}^n$ and let $f:Srightarrow mathbb{R}$ be differentiable on S. Then, f is convex if and only if for $x_1,x_2 in S, bigtriangledown fleft ( x_2 right )^T left ( x_1-x_2 right ) leq fleft ( x_1 right )-fleft ( x_2 right )$

Proof

Let f be a convex function. i.e., for $x_1,x_2 in S, lambda in left ( 0, 1 right )$

$fleft [ lambda x_1+left ( 1-lambda right )x_2 right ]leq lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right )$

$ Rightarrow fleft [ lambda x_1+left ( 1-lambda right )x_2 right ]leq lambda left ( fleft ( x_1 right )-fleft ( x_2 right ) right )+fleft ( x_2 right )$

$ Rightarrowlambda left ( fleft ( x_1 right )-fleft ( x_2 right ) right )geq fleft ( x_2+lambda left ( x_1-x_2 right ) right )-fleft ( x_2 right )$

$Rightarrow lambda left ( fleft ( x_1 right )-fleft ( x_2 right ) right )geq fleft ( x_2 right )+bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right )lambda +$

$left | lambda left ( x_1-x_2 right ) right |alpha left ( x_2,lambdaleft (x_1 – x_2 right )-fleft ( x_2 right ) right )$

where $alphaleft ( x_2, lambdaleft (x_1 – x_2 right ) right )rightarrow 0$ as$lambda rightarrow 0$

Dividing by $lambda$ on both sides, we get −

$fleft ( x_1 right )-fleft ( x_2 right ) geq bigtriangledown fleft ( x_2 right )^T left ( x_1-x_2 right )$

Converse

Let for $x_1,x_2 in S, bigtriangledown fleft ( x_2 right )^T left ( x_1-x_2 right ) leq fleft ( x_1 right )-f left ( x_2 right )$

To show that f is convex.

Since S is convex, $x_3=lambda x_1+left (1-lambda right )x_2 in S, lambda in left ( 0, 1 right )$

Since $x_1, x_3 in S$, therefore

$fleft ( x_1 right )-f left ( x_3 right ) geq bigtriangledown fleft ( x_3 right )^T left ( x_1 -x_3right )$

$ Rightarrow fleft ( x_1 right )-f left ( x_3 right )geq bigtriangledown fleft ( x_3 right )^T left ( x_1 – lambda x_1-left (1-lambda right )x_2right )$

$ Rightarrow fleft ( x_1 right )-f left ( x_3 right )geq left ( 1- lambdaright )bigtriangledown fleft ( x_3 right )^T left ( x_1 – x_2right )$

Since, $x_2, x_3 in S$ therefore

$fleft ( x_2 right )-fleft ( x_3 right )geq bigtriangledown fleft ( x_3 right )^Tleft ( x_2-x_3 right )$

$Rightarrow fleft ( x_2 right )-fleft ( x_3 right )geq bigtriangledown fleft ( x_3 right )^Tleft ( x_2-lambda x_1-left ( 1-lambda right )x_2 right )$

$Rightarrow fleft ( x_2 right )-fleft ( x_3 right )geq left ( -lambda right )bigtriangledown fleft ( x_3 right )^Tleft ( x_1-x_2 right )$

Thus, combining the above equations, we get −

$lambda left ( fleft ( x_1 right )-fleft ( x_3 right ) right )+left ( 1- lambda right )left ( fleft ( x_2 right )-fleft ( x_3 right ) right )geq 0$

$Rightarrow fleft ( x_3right )leq lambda fleft ( x_1 right )+left ( 1-lambda right )fleft ( x_2 right )$

Theorem

let S be a non-empty open convex set in $mathbb{R}^n$ and let $f:S rightarrow mathbb{R}$ be differentiable on S, then f is convex on S if and only if for any $x_1,x_2 in S,left ( bigtriangledown f left ( x_2 right )-bigtriangledown f left ( x_1 right ) right )^T left ( x_2-x_1 right ) geq 0$

Proof

let f be a convex function, then using the previous theorem −

$bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right )leq fleft ( x_1 right )-fleft ( x_2 right )$ and

$bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )leq fleft ( x_2 right )-fleft ( x_1 right )$

Adding the above two equations, we get −

$bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right )+bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )leq 0$

$Rightarrow left ( bigtriangledown fleft ( x_2 right )-bigtriangledown fleft ( x_1 right ) right )^Tleft ( x_1-x_2 right )leq 0$

$Rightarrow left ( bigtriangledown fleft ( x_2 right )-bigtriangledown fleft ( x_1 right ) right )^Tleft ( x_2-x_1 right )geq 0$

Converse

Let for any $x_1,x_2 in S,left (bigtriangledown f left ( x_2right )- bigtriangledown f left ( x_1right )right )^T left ( x_2-x_1right )geq 0$

To show that f is convex.

Let $x_1,x_2 in S$, thus by mean value theorem, $frac{fleft ( x_1right )-fleft ( x_2right )}{x_1-x_2}=bigtriangledown fleft ( xright ),x in left ( x_1-x_2right ) Rightarrow x= lambda x_1+left ( 1-lambdaright )x_2$ because S is a convex set.

$Rightarrow fleft ( x_1 right )- fleft ( x_2 right )=left ( bigtriangledown fleft ( x right )^T right )left ( x_1-x_2 right )$

for $x,x_1$, we know −

$left ( bigtriangledown fleft ( x right )-bigtriangledown fleft ( x_1 right ) right )^Tleft ( x-x_1 right )geq 0$

$Rightarrow left ( bigtriangledown fleft ( x right )-bigtriangledown fleft ( x_1 right ) right )^Tleft ( lambda x_1+left ( 1-lambda right )x_2-x_1 right )geq 0$

$Rightarrow left ( bigtriangledown fleft ( x right )- bigtriangledown fleft ( x_1 right )right )^Tleft ( 1- lambda right )left ( x_2-x_1 right )geq 0$

$Rightarrow bigtriangledown fleft ( x right )^Tleft ( x_2-x_1 right )geq bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )$

Combining the above equations, we get −

$Rightarrow bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )leq fleft ( x_2 right )-fleft ( x_1 right )$

Hence using the last theorem, f is a convex function.

Twice Differentiable function

Let S be a non-empty subset of $mathbb{R}^n$ and let $f:Srightarrow mathbb{R}$ then f is said to be twice differentiable at $bar{x} in S$ if there exists a vector $bigtriangledown fleft (bar{x}right ), a :nXn$ matrix $Hleft (xright )$(called Hessian matrix) and a function $alpha:mathbb{R}^n rightarrow mathbb{R}$ such that $fleft ( x right )=fleft ( bar{x}+x-bar{x} right )=fleft ( bar{x} right )+bigtriangledown fleft ( bar{x} right )^Tleft ( x-bar{x} right )+frac{1}{2}left ( x-bar{x} right )Hleft ( bar{x} right )left ( x-bar{x} right )$

where $ alpha left ( bar{x}, x-bar{x} right )rightarrow Oasxrightarrow bar{x}$

Sufficient & Necessary Conditions for Global Optima

Theorem

Let f be twice differentiable function. If $bar{x}$ is a local minima, then $bigtriangledown fleft ( bar{x} right )=0$ and the Hessian matrix $Hleft ( bar{x} right )$ is a positive semidefinite.

Proof

Let $d in mathbb{R}^n$. Since f is twice differentiable at $bar{x}$.

Therefore,

$fleft ( bar{x} +lambda dright )=fleft ( bar{x} right )+lambda bigtriangledown fleft ( bar{x} right )^T d+lambda^2d^THleft ( bar{x} right )d+lambda^2d^THleft ( bar{x} right )d+$

$lambda^2left | d right |^2beta left ( bar{x}, lambda d right )$

But $bigtriangledown fleft ( bar{x} right )=0$ and $betaleft ( bar{x}, lambda d right )rightarrow 0$ as $lambda rightarrow 0$

$Rightarrow fleft ( bar{x} +lambda d right )-fleft ( bar{x} right )=lambda ^2d^THleft ( bar{x} right )d$

Since $bar{x }$ is a local minima, there exists a $delta > 0$ such that $fleft ( x right )leq fleft ( bar{x}+lambda d right ), forall lambda in left ( 0,delta right )$

Theorem

Let $f:S rightarrow mathbb{R}^n$ where $S subset mathbb{R}^n$ be twice differentiable over S. If $bigtriangledown fleft ( xright )=0$ and $Hleft ( bar{x} right )$ is positive semi-definite, for all $x in S$, then $bar{x}$ is a global optimal solution.

Proof

Since $Hleft ( bar{x} right )$ is positive semi-definite, f is convex function over S. Since f is differentiable and convex at $bar{x}$

$bigtriangledown fleft ( bar{x} right )^T left ( x-bar{x} right ) leq fleft (xright )-fleft (bar{x}right ),forall x in S$

Since $bigtriangledown fleft ( bar{x} right )=0, fleft ( x right )geq fleft ( bar{x} right )$

Hence, $bar{x}$ is a global optima.

Theorem

Suppose $bar{x} in S$ is a local optimal solution to the problem $f:S rightarrow mathbb{R}$ where S is a non-empty subset of $mathbb{R}^n$ and S is convex. $min :fleft ( x right )$ where $x in S$.

Then:

-

$bar{x}$ is a global optimal solution.

-

If either $bar{x}$ is strictly local minima or f is strictly convex function, then $bar{x}$ is the unique global optimal solution and is also strong local minima.

Proof

Let $bar{x}$ be another global optimal solution to the problem such that $x neq bar{x}$ and $fleft ( bar{x} right )=fleft ( hat{x} right )$

Since $hat{x},bar{x} in S$ and S is convex, then $frac{hat{x}+bar{x}}{2} in S$ and f is strictly convex.

$Rightarrow fleft ( frac{hat{x}+bar{x}}{2} right )

This is contradiction.

Hence, $hat{x}$ is a unique global optimal solution.

Corollary

Let $f:S subset mathbb{R}^n rightarrow mathbb{R}$ be a differentiable convex function where $phi neq Ssubset mathbb{R}^n$ is a convex set. Consider the problem $min fleft (xright ),x in S$,then $bar{x}$ is an optimal solution if $bigtriangledown fleft (bar{x}right )^Tleft (x-bar{x}right ) geq 0,forall x in S.$

Proof

Let $bar{x}$ is an optimal solution, i.e, $fleft (bar{x}right )leq fleft (xright ),forall x in S$

$Rightarrow fleft (xright )=fleft (bar{x}right )geq 0$

$fleft (xright )=fleft (bar{x}right )+bigtriangledown fleft (bar{x}right )^Tleft (x-bar{x}right )+left | x-bar{x} right |alpha left ( bar{x},x-bar{x} right )$

where $alpha left ( bar{x},x-bar{x} right )rightarrow 0$ as $x rightarrow bar{x}$

$Rightarrow fleft (xright )-fleft (bar{x}right )=bigtriangledown fleft (bar{x}right )^Tleft (x-bar{x}right )geq 0$

Corollary

Let f be a differentiable convex function at $bar{x}$,then $bar{x}$ is global minimum iff $bigtriangledown fleft (bar{x}right )=0$

Examples

-

$fleft (xright )=left (x^2-1right )^{3}, x in mathbb{R}$.

$bigtriangledown fleft (xright )=0 Rightarrow x= -1,0,1$.

$bigtriangledown^2fleft (pm 1 right )=0, bigtriangledown^2 fleft (0 right )=6>0$.

$fleft (pm 1 right )=0,fleft (0 right )=-1$

Hence, $fleft (x right ) geq -1=fleft (0 right )Rightarrow fleft (0 right ) leq f left (x right)forall x in mathbb{R}$

-

$fleft (x right )=xlog x$ defined on $S=left { x in mathbb{R}, x> 0 right }$.

${f}”x=1+log x$

${f}””x=frac{1}{x}>0$

Thus, this function is strictly convex.

-

$f left (x right )=e^{x},x in mathbb{R}$ is strictly convex.

Quasiconvex and Quasiconcave functions

Let $f:S rightarrow mathbb{R}$ where $S subset mathbb{R}^n$ is a non-empty convex set. The function f is said to be quasiconvex if for each $x_1,x_2 in S$, we have $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq maxleft { fleft ( x_1 right ),fleft ( x_2 right ) right },lambda in left ( 0, 1 right )$

For example, $fleft ( x right )=x^{3}$

Let $f:Srightarrow R $ where $Ssubset mathbb{R}^n$ is a non-empty convex set. The function f is said to be quasiconvex if for each $x_1, x_2 in S$, we have $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )geq minleft { fleft ( x_1 right ),fleft ( x_2 right ) right }, lambda in left ( 0, 1 right )$

Remarks

- Every convex function is quasiconvex but the converse is not true.

- A function which is both quasiconvex and quasiconcave is called quasimonotone.

Theorem

Let $f:Srightarrow mathbb{R}$ and S is a non empty convex set in $mathbb{R}^n$. The function f is quasiconvex if and only if $S_{alpha} =left ( x in S:fleft ( x right )leq alpha right }$ is convex for each real number alpha$

Proof

Let f is quasiconvex on S.

Let $x_1,x_2 in S_{alpha}$ therefore $x_1,x_2 in S$ and $max left { fleft ( x_1 right ),fleft ( x_2 right ) right }leq alpha$

Let $lambda in left (0, 1 right )$ and let $x=lambda x_1+left ( 1-lambda right )x_2leq max left { fleft ( x_1 right ),fleft ( x_2 right ) right }Rightarrow x in S$

Thus, $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq maxleft { fleft ( x_1 right ), fleft ( x_2 right ) right }leq alpha$

Therefore, $S_{alpha}$ is convex.

Converse

Let $S_{alpha}$ is convex for each $alpha$

$x_1,x_2 in S, lambda in left ( 0,1right )$

$x=lambda x_1+left ( 1-lambda right )x_2$

Let $x=lambda x_1+left ( 1-lambda right )x_2$

For $x_1, x_2 in S_{alpha}, alpha= max left { fleft ( x_1 right ), fleft ( x_2 right ) right }$

$Rightarrow lambda x_1+left (1-lambda right )x_2 in S_{alpha}$

$Rightarrow f left (lambda x_1+left (1-lambda right )x_2 right )leq alpha$

Hence proved.

Theorem

Let $f:Srightarrow mathbb{R}$ and S is a non empty convex set in $mathbb{R}^n$. The function f is quasiconcave if and only if $S_{alpha} =left { x in S:fleft ( x right )geq alpha right }$ is convex for each real number $alpha$.

Theorem

Let $f:Srightarrow mathbb{R}$ and S is a non empty convex set in $mathbb{R}^n$. The function f is quasimonotone if and only if $S_{alpha} =left { x in S:fleft ( x right )= alpha right }$ is convex for each real number $alpha$.

Differentiable Quasiconvex Function

Theorem

Let S be a non empty convex set in $mathbb{R}^n$ and $f:S rightarrow mathbb{R}$ be differentiable on S, then f is quasiconvex if and only if for any $x_1,x_2 in S$ and $fleft ( x_1 right )leq fleft ( x_2 right )$, we have $bigtriangledown fleft ( x_2 right )^Tleft ( x_2-x_1 right )leq 0$

Proof

Let f be a quasiconvex function.

Let $x_1,x_2 in S$ such that $fleft ( x_1 right ) leq fleft ( x_2 right )$

By differentiability of f at $x_2, lambda in left ( 0, 1 right )$

$fleft ( lambda x_1+left ( 1-lambda right )x_2 right )=fleft ( x_2+lambda left (x_1-x_2 right ) right )=fleft ( x_2 right )+bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right )$

$+lambda left | x_1-x_2 right |alpha left ( x_2,lambda left ( x_1-x_2 right ) right )$

$Rightarrow fleft ( lambda x_1+left ( 1-lambda right )x_2 right )-fleft ( x_2 right )-fleft ( x_2 right )=bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right )$

$+lambda left | x_1-x_2 right |alpha left ( x2, lambdaleft ( x_1-x_2 right )right )$

But since f is quasiconvex, $f left ( lambda x_1+ left ( 1- lambda right )x_2 right )leq f left (x_2 right )$

$bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right )+lambda left | x_1-x_2 right |alpha left ( x_2,lambda left ( x_1,x_2 right ) right )leq 0$

But $alpha left ( x_2,lambda left ( x_1,x_2 right )right )rightarrow 0$ as $lambda rightarrow 0$

Therefore, $bigtriangledown fleft ( x_2 right )^Tleft ( x_1-x_2 right ) leq 0$

Converse

let for $x_1,x_2 in S$ and $fleft ( x_1 right )leq fleft ( x_2 right )$, $bigtriangledown fleft ( x_2 right )^T left ( x_1,x_2 right ) leq 0$

To show that f is quasiconvex,ie, $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )leq fleft ( x_2 right )$

Proof by contradiction

Suppose there exists an $x_3= lambda x_1+left ( 1-lambda right )x_2$ such that $fleft ( x_2 right )

For $x_2$ and $x_3,bigtriangledown fleft ( x_3 right )^T left ( x_2-x_3 right ) leq 0$

$Rightarrow -lambda bigtriangledown fleft ( x_3 right )^Tleft ( x_2-x_3 right )leq 0$

$Rightarrow bigtriangledown fleft ( x_3 right )^T left ( x_1-x_2 right )geq 0$

For $x_1$ and $x_3,bigtriangledown fleft ( x_3 right )^T left ( x_1-x_3 right ) leq 0$

$Rightarrow left ( 1- lambda right )bigtriangledown fleft ( x_3 right )^Tleft ( x_1-x_2 right )leq 0$

$Rightarrow bigtriangledown fleft ( x_3 right )^T left ( x_1-x_2 right )leq 0$

thus, from the above equations, $bigtriangledown fleft ( x_3 right )^T left ( x_1-x_2 right )=0$

Define $U=left { x:fleft ( x right )leq fleft ( x_2 right ),x=mu x_2+left ( 1-mu right )x_3, mu in left ( 0,1 right ) right }$

Thus we can find $x_0 in U$ such that $x_0 = mu_0 x_2= mu x_2+left ( 1- mu right )x_3$ for some $mu _0 in left ( 0,1 right )$ which is nearest to $x_3$ and $hat{x} in left ( x_0,x_1 right )$ such that by mean value theorem,

$$frac{fleft ( x_3right )-fleft ( x_0right )}{x_3-x_0}= bigtriangledown fleft ( hat{x}right )$$

$$Rightarrow fleft ( x_3 right )=fleft ( x_0 right )+bigtriangledown fleft ( hat{x} right )^Tleft ( x_3-x_0 right )$$

$$Rightarrow fleft ( x_3 right )=fleft ( x_0 right )+mu_0 lambda fleft ( hat{x}right )^T left ( x_1-x_2 right )$$

Since $x_0$ is a combination of $x_1$ and $x_2$ and $fleft (x_2 right )

By repeating the starting procedure, $bigtriangledown f left ( hat{x}right )^T left ( x_1-x_2right )=0$

Thus, combining the above equations, we get:

$$fleft ( x_3right )=fleft ( x_0 right ) leq fleft ( x_2right )$$

$$Rightarrow fleft ( x_3right )leq fleft ( x_2right )$$

Hence, it is contradiction.

Examples

Step 1 − $fleft ( xright )=X^3$

$Let f left ( x_1right )leq fleft ( x_2right )$

$Rightarrow x_{1}^{3}leq x_{2}^{3}Rightarrow x_1leq x_2$

$bigtriangledown fleft ( x_2 right )left ( x_1-x_2 right )=3x_{2}^{2}left ( x_1-x_2 right )leq 0$

Thus, $fleft ( xright )$ is quasiconvex.

Step 2 − $fleft ( xright )=x_{1}^{3}+x_{2}^{3}$

Let $hat{x_1}=left ( 2, -2right )$ and $hat{x_2}=left ( 1, 0right )$

thus, $fleft ( hat{x_1}right )=0,fleft ( hat{x_2}right )=1 Rightarrow fleft ( hat{x_1}right )setminus

Thus, $bigtriangledown f left ( hat{x_2}right )^T left ( hat{x_1}- hat{x_2}right )= left ( 3, 0right )^T left ( 1, -2right )=3 >0$

Hence $fleft ( xright )$ is not quasiconvex.

Strictly Quasiconvex Function

Let $f:Srightarrow mathbb{R}^n$ and S be a non-empty convex set in $mathbb{R}^n$ then f is said to be strictly quasicovex function if for each $x_1,x_2 in S$ with $fleft ( x_1 right ) neq fleft ( x_2 right )$, we have $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )

Remarks

- Every strictly quasiconvex function is strictly convex.

- Strictly quasiconvex function does not imply quasiconvexity.

- Strictly quasiconvex function may not be strongly quasiconvex.

- Pseudoconvex function is a strictly quasiconvex function.

Theorem

Let $f:Srightarrow mathbb{R}^n$ be strictly quasiconvex function and S be a non-empty convex set in $mathbb{R}^n$.Consider the problem: $min :fleft ( x right ), x in S$. If $hat{x}$ is local optimal solution, then $bar{x}$ is global optimal solution.

Proof

Let there exists $ bar{x} in S$ such that $fleft ( bar{x}right )leq f left ( hat{x}right )$

Since $bar{x},hat{x} in S$ and S is convex set, therefore,

$$lambda bar{x}+left ( 1-lambda right )hat{x}in S, forall lambda in left ( 0,1 right )$$

Since $hat{x}$ is local minima, $fleft ( hat{x} right ) leq fleft ( lambda bar{x}+left ( 1-lambda right )hat{x} right ), forall lambda in left ( 0,delta right )$

Since f is strictly quasiconvex.

$$fleft ( lambda bar{x}+left ( 1-lambda right )hat{x} right )

Hence, it is contradiction.

Strictly quasiconcave function

Let $f:Srightarrow mathbb{R}^n$ and S be a non-empty convex set in $mathbb{R}^n$, then f is saud to be strictly quasicovex function if for each $x_1,x_2 in S$ with $fleft (x_1right )neq fleft (x_2right )$, we have

$$fleft (lambda x_1+left (1-lambdaright )x_2right )> min left { f left (x_1right ),fleft (x_2right )right }$$.

Examples

-

$fleft (xright )=x^2-2$

It is a strictly quasiconvex function because if we take any two points $x_1,x_2$ in the domain that satisfy the constraints in the definition $fleft (lambda x_1+left (1- lambdaright )x_2right )

-

$fleft (xright )=-x^2$

It is not a strictly quasiconvex function because if we take take $x_1=1$ and $x_2=-1$ and $lambda=0.5$, then $fleft (x_1right )=-1=fleft (x_2right )$ but $fleft (lambda x_1+left (1- lambdaright )x_2right )=0$ Therefore it does not satisfy the conditions stated in the definition. But it is a quasiconcave function because if we take any two points in the domain that satisfy the constraints in the definition $fleft ( lambda x_1+left (1-lambdaright )x_2right )> min left { f left (x_1right ),fleft (x_2right )right }$. As the function is increasing in the negative x-axis and it is decreasing in the positive x-axis.

Strongly Quasiconvex Function

Let $f:Srightarrow mathbb{R}^n$ and S be a non-empty convex set in $mathbb{R}^n$ then f is strongly quasiconvex function if for any $x_1,x_2 in S$ with $left ( x_1 right ) neq left ( x_2 right )$, we have $fleft ( lambda x_1+left ( 1-lambda right )x_2 right )

Theorem

A quasiconvex function $f:Srightarrow mathbb{R}^n$ on a non-empty convex set S in $mathbb{R}^n$ is strongly quasiconvex function if it is not constant on a line segment joining any points of S.

Proof

Let f is quasiconvex function and it is not constant on a line segment joining any points of S.

Suppose f is not strongly quasiconvex function.

There exist $x_1,x_2 in S$ with $x_1 neq x_2$ such that

$$fleft ( z right )geq maxleft { fleft ( x_1 right ), fleft ( x_2 right ) right }, forall z= lambda x_1+left ( 1-lambda right )x_2, lambda in left ( 0,1 right )$$

$Rightarrow fleft ( x_1 right )leq fleft ( z right )$ and $fleft ( x_2 right )leq fleft ( z right )$

Since f is not constant in $left [ x_1,z right ]$ and $left [z,x_2 right ] $

So there exists $u in left [ x_1,z right ]$ and $v=left [ z,x_2 right ]$

$$Rightarrow u= mu_1x_1+left ( 1-mu_1right )z,v=mu_2z+left ( 1- mu_2right )x_2$$

Since f is quasiconvex,

$$Rightarrow fleft ( u right )leq maxleft { fleft ( x_1 right ),f left ( z right ) right }=fleft ( z right ):: and ::f left ( v right ) leq max left { fleft ( z right ),fleft ( x_2 right ) right }$$

$$Rightarrow fleft ( u right )leq fleft ( z right ) :: and :: fleft ( v right )leq fleft ( z right )$$

$$Rightarrow max left { fleft ( u right ),fleft ( v right ) right } leq fleft ( z right )$$

But z is any point between u and v, if any of them are equal, then f is constant.

Therefore, $max left { fleft ( u right ),fleft ( v right ) right } leq fleft ( z right )$

which contradicts the quasiconvexity of f as $z in left [ u,v right ]$.

Hence f is strongly quasiconvex function.

Theorem

Let $f:Srightarrow mathbb{R}^n$ and S be a non-empty convex set in $mathbb{R}^n$. If $hat{x}$ is local optimal solution, then $hat{x}$ is unique global optimal solution.

Proof

Since a strong quasiconvex function is also strictly quasiconvex function, thus a local optimal solution is global optimal solution.

Uniqueness − Let f attains global optimal solution at two points $u,v in S$

$$Rightarrow fleft ( u right ) leq fleft ( x right ).forall x in S:: and ::fleft ( v right ) leq fleft ( x right ).forall x in S$$

If u is global optimal solution, $fleft ( u right )leq fleft ( v right )$ and $fleft ( v right )leq fleft ( uright )Rightarrow fleft ( u right )=fleft ( vright )$

$$fleft ( lambda u+left ( 1-lambdaright )vright )

which is a contradiction.

Hence there exists only one global optimal solution.

Remarks

- A strongly quasiconvex function is also strictly quasiconvex fucntion.

- A strictly convex function may or may not be strongly quasiconvex.

- A differentiable strictly convex is strongly quasiconvex.

Pseudoconvex Function

Let $f:Srightarrow mathbb{R}$ be a differentiable function and S be a non-empty convex set in $mathbb{R}^n$, then f is said to be pseudoconvex if for each $x_1,x_2 in S$ with $bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )geq 0$, we have $fleft ( x_2 right )geq fleft ( x_1 right )$, or equivalently if $fleft ( x_1 right )>fleft ( x_2 right )$ then $bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )

Pseudoconcave function

Let $f:Srightarrow mathbb{R}$ be a differentiable function and S be a non-empty convex set in $mathbb{R}^n$, then f is said to be pseudoconvex if for each $x_1, x_2 in S$ with $bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )geq 0$, we have $fleft ( x_2 right )leq fleft ( x_1 right )$, or equivalently if $fleft ( x_1 right )>fleft ( x_2 right )$ then $bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )>0$

Remarks

-

If a function is both pseudoconvex and pseudoconcave, then is is called pseudolinear.

-

A differentiable convex function is also pseudoconvex.

-

A pseudoconvex function may not be convex. For example,

$fleft ( x right )=x+x^3$ is not convex. If $x_1 leq x_2,x_{1}^{3} leq x_{2}^{3}$

Thus,$bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )=left ( 1+3x_{1}^{2} right )left ( x_2-x_1 right ) geq 0$

And, $fleft ( x_2 right )-fleft ( x_1 right )=left ( x_2-x_1 right )+left ( x_{2}^{3} -x_{1}^{3}right )geq 0$

$Rightarrow fleft ( x_2 right )geq fleft ( x_1 right )$

Thus, it is pseudoconvex.

A pseudoconvex function is strictly quasiconvex. Thus, every local minima of pseudoconvex is also global minima.

Strictly pseudoconvex function

Let $f:Srightarrow mathbb{R}$ be a differentiable function and S be a non-empty convex set in $mathbb{R}^n$, then f is said to be pseudoconvex if for each $x_1,x_2 in S$ with $bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )geq 0$, we have $fleft ( x_2 right )> fleft ( x_1 right )$,or equivalently if $fleft ( x_1 right )geq fleft ( x_2 right )$ then $bigtriangledown fleft ( x_1 right )^Tleft ( x_2-x_1 right )

Theorem

Let f be a pseudoconvex function and suppose $bigtriangledown fleft ( hat{x}right )=0$ for some $hat{x} in S$, then $hat{x}$ is global optimal solution of f over S.

Proof

Let $hat{x}$ be a critical point of f, ie, $bigtriangledown fleft ( hat{x}right )=0$

Since f is pseudoconvex function, for $x in S,$ we have

$$bigtriangledown fleft ( hat{x}right )left ( x-hat{x}right )=0 Rightarrow fleft ( hat{x}right )leq fleft ( xright ), forall x in S$$

Hence, $hat{x}$ is global optimal solution.

Remark

If f is strictly pseudoconvex function, $hat{x}$ is unique global optimal solution.

Theorem

If f is differentiable pseudoconvex function over S, then f is both strictly quasiconvex as well as quasiconvex function.

Remarks

-

The sum of two pseudoconvex fucntions defined on an open set S of $mathbb{R}^n$ may not be pseudoconvex.

-

Let $f:Srightarrow mathbb{R}$ be a quasiconvex function and S be a non-empty convex subset of $mathbb{R}^n$ then f is pseudoconvex if and only if every critical point is a global minima of f over S.

-

Let S be a non-empty convex subset of $mathbb{R}^n$ and $f:Srightarrow mathbb{R}$ be a function such that $bigtriangledown fleft ( xright )neq 0$ for every $x in S$ then f is pseudoconvex if and only if it is a quasiconvex function.

Convex Optimization – Programming Problem

There are four types of convex programming problems −

Step 1 − $min :fleft ( x right )$, where $x in S$ and S be a non-empty convex set in $mathbb{R}^n$ and $fleft ( x right )$ is convex function.

Step 2 − $min : fleft ( x right ), x in mathbb{R}^n$ subject to

$g_ileft ( x right ) geq 0, 1 leq m_1$ and $g_ileft ( x right )$ is a convex function.

$g_ileft ( x right ) leq 0,m_1+1 leq m_2$ and $g_ileft ( x right )$ is a concave function.

$g_ileft ( x right ) = 0, m_2+1 leq m$ and $g_ileft ( x right )$ is a linear function.

where $fleft ( x right )$ is a convex fucntion.

Step 3 − $max :fleft ( x right )$ where $x in S$ and S be a non-empty convex set in $mathbb{R}^n$ and $fleft ( x right )$ is concave function.

Step 4 − $min :fleft ( x right )$, where $x in mathbb{R}^n$ subject to

$g_ileft ( x right ) geq 0, 1 leq m_1$ and $g_ileft ( x right )$ is a convex function.

$g_ileft ( x right ) leq 0, m_1+1 leq m_2$ and $g_ileft ( x right )$ is a concave function.

$g_ileft ( x right ) = 0,m_2+1 leq m$ and $g_ileft ( x right )$ is a linear function.

where $fleft ( x right )$ is a concave function.

Cone of feasible direction

Let S be a non-empty set in $mathbb{R}^n$ and let $hat{x} in :Closureleft ( S right )$, then the cone of feasible direction of S at $hat{x}$, denoted by D, is defined as $D=left { d:dneq 0,hat{x}+lambda d in S, lambda in left ( 0, delta right ), delta > 0 right }$

Each non-zero vector $d in D$ is called feasible direction.

For a given function $f:mathbb{R}^n Rightarrow mathbb{R}$ the cone of improving direction at $hat{x}$ is denoted by F and is given by

$$F=left { d:fleft ( hat{x}+lambda d right )leq fleft ( hat{x} right ),forall lambda in left ( 0,delta right ), delta >0 right }$$

Each direction $d in F$ is called an improving direction or descent direction of f at $hat{x}$

Theorem

Necessary Condition

Consider the problem $min fleft ( x right )$ such that $x in S$ where S be a non-empty set in $mathbb{R}^n$. Suppose f is differentiable at a point $hat{x} in S$. If $hat{x}$ is a local optimal solution, then $F_0 cap D= phi$ where $F_0=left { d:bigtriangledown fleft ( hat{x} right )^T d

Sufficient Condition

If $F_0 cap D= phi$ f is a pseudoconvex function at $hat{x}$ and there exists a neighbourhood of $hat{x},N_varepsilon left ( hat{x} right ), varepsilon > 0$ such that $d=x-hat{x}in D$ for any $x in S cap N_varepsilon left ( hat{x} right )$, then $hat{x}$ is local optimal solution.

Proof

Necessary Condition

Let $F_0 cap Dneq phi$, ie, there exists a $d in F_0 cap D$ such that $d in F_0$ and $din D$

Since $d in D$, therefore there exists $delta_1 >0$ such that $hat{x}+lambda d in S, lambda in left ( 0,delta_1 right ).$

Since $d in F_0$, therefore $bigtriangledown f left ( hat{x}right )^T d

Thus, there exists $delta_2>0$ such that $fleft ( hat{x}+lambda dright )

Let $delta=min left {delta_1,delta_2 right }$

Then $hat{x}+lambda d in S, fleft (hat{x}+lambda d right )

But $hat{x}$ is local optimal solution.

Hence it is contradiction.

Thus $F_0cap D=phi$

Sufficient Condition

Let $F_0 cap Dneq phi$ nd let f be a pseudoconvex function.

Let there exists a neighbourhood of $hat{x}, N_{varepsilon}left ( hat{x} right )$ such that $d=x-hat{x}, forall x in S cap N_varepsilonleft ( hat{x} right )$

Let $hat{x}$ is not a local optimal solution.

Thus, there exists $bar{x} in S cap N_varepsilon left ( hat{x} right )$ such that $f left ( bar{x} right )

By assumption on $S cap N_varepsilon left ( hat{x} right ),d=left ( bar{x}-hat{x} right )in D$

By pseudoconvex of f,

$$fleft ( hat{x} right )>fleft ( bar{x} right )Rightarrow bigtriangledown fleft ( hat{x} right )^Tleft ( bar{x}-hat{x} right )

$Rightarrow bigtriangledown fleft ( hat{x} right) ^T d

$Rightarrow d in F_0$

hence $F_0cap D neq phi$

which is a contradiction.

Hence, $hat{x}$ is local optimal solution.

Consider the following problem:$min :fleft ( xright )$ where $x in X$ such that $g_xleft ( xright ) leq 0, i=1,2,…,m$

$f:X rightarrow mathbb{R},g_i:X rightarrow mathbb{R}^n$ and X is an open set in $mathbb{R}^n$

Let $S=left {x:g_ileft ( xright )leq 0,forall i right }$

Let $hat{x} in X$, then $M=left {1,2,…,m right }$

Let $I=left {i:g_ileft ( hat{x}right )=0, i in Mright }$ where I is called index set for all active constraints at $hat{x}$

Let $J=left {i:g_ileft ( hat{x}right )

Let $F_0=left { d in mathbb{R}^m:bigtriangledown fleft ( hat{x} right )^T d

Let $G_0=left { d in mathbb{R}^m:bigtriangledown gIleft ( hat{x} right )^T d

or $G_0=left { d in mathbb{R}^m:bigtriangledown gileft ( hat{x} right )^T d

Lemma

If $S=left { x in X:g_ileft ( xright ) leq 0, forall i in Iright }$ and X is non-empty open set in $mathbb{R}^n$. Let $hat{x}in S$ and $g_i$ are different at $hat{x}, i in I$ and let $g_i$ where $i in J$ are continuous at $hat{x}$, then $G_0 subseteq D$.

Proof

Let $d in G_0$

Since $hat{x} in X$ and X is an open set, thus there exists $delta_1 >0$ such that $hat{x}+lambda d in X$ for $lambda in left ( 0, delta_1right )$

Also since $g_hat{x}0$, $g_ileft ( hat{x}+lambda dright )

Since $d in G_0$, therefore, $bigtriangledown g_ileft ( hat{x}right )^T d 0, g_ileft ( hat{x}+lambda dright )

Let $delta=minleft { delta_1, delta_2, delta_3 right }$

therefore, $hat{x}+lambda d in X, g_ileft ( hat{x}+lambda dright )

$Rightarrow hat{x}+lambda d in S$

$Rightarrow d in D$

$Rightarrow G_0 subseteq D$

Hence Proved.

Theorem

Necessary Condition